AI Voice Tech Is Getting Wild: OpenAI's Latest Breakthrough in Voice Assistants

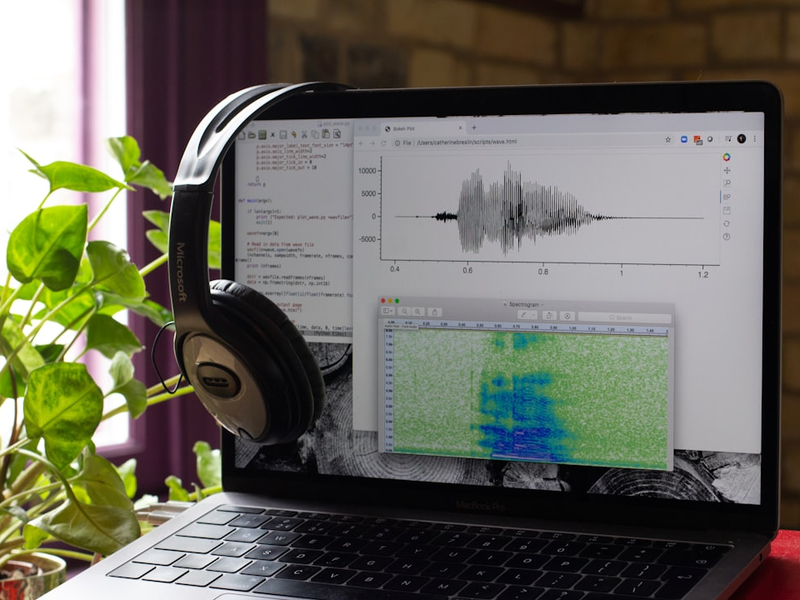

Photo by Catherine Breslin on Unsplash

OpenAI just dropped a game-changing voice AI model that could revolutionize how businesses interact with customers. Its new “gpt-realtime” model promises more natural, expressive conversations than earlier voice assistants could manage.

The new technology allows AI systems to understand complex vocal instructions, switch languages mid-sentence, and even pick up non-verbal cues like laughs and sighs. Imagine calling customer service and reaching an AI assistant that sounds remarkably human: that’s the future OpenAI is building.

Companies like T-Mobile and Zillow are already testing the technology, with use cases ranging from helping customers find the right phone to narrowing down neighborhood searches. The model also scores 82.8% on benchmark tests, an improvement over previous versions of the model.

What makes gpt-realtime truly exciting is its improved instruction following. The AI can now act on nuanced commands like “speak emphatically in a French accent,” a significant leap from traditional voice assistants.

OpenAI has also made the technology more affordable, cutting prices by 20%: audio input tokens now cost $32 per million and audio output tokens $64 per million. The Realtime API also gains new features, including support for image inputs and Session Initiation Protocol (SIP) calls, which could open up even more enterprise applications.
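To get a feel for what those per-million rates mean in practice, here is a minimal cost sketch. It assumes simple linear per-token billing at the two audio prices quoted above and ignores text tokens, cached-input discounts, and any other charges; the function name and the token counts in the example are hypothetical, chosen only for illustration.

```python
# Audio-token prices quoted in the article for gpt-realtime, in US dollars per million tokens.
AUDIO_INPUT_PRICE_PER_M = 32.00
AUDIO_OUTPUT_PRICE_PER_M = 64.00


def estimate_audio_cost(input_tokens: int, output_tokens: int) -> float:
    """Hypothetical helper: estimate the audio-token cost of a session in dollars.

    Assumes linear per-token billing at the quoted rates; ignores text tokens,
    cached-input discounts, and any other fees.
    """
    input_cost = input_tokens / 1_000_000 * AUDIO_INPUT_PRICE_PER_M
    output_cost = output_tokens / 1_000_000 * AUDIO_OUTPUT_PRICE_PER_M
    return input_cost + output_cost


# Hypothetical session: 50,000 audio tokens in, 25,000 audio tokens out.
cost = estimate_audio_cost(50_000, 25_000)
print(f"${cost:.2f}")  # prints "$3.20"
```

Because output tokens cost twice as much as input tokens, half as many output tokens cost the same as the input here ($1.60 each), which is why long spoken replies tend to dominate the bill.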

While competitors like ElevenLabs and SoundHound are also developing voice AI technologies, OpenAI’s approach seems focused on creating more emotionally intelligent and context-aware systems.

As AI continues to evolve, we’re witnessing a transformative moment in how technology understands and communicates with humans. OpenAI’s gpt-realtime isn’t just another voice model; it’s a glimpse of a future where AI conversations feel increasingly natural and nuanced.

AUTHOR: mei

SOURCE: VentureBeat