Google's New AI Trick Could Change Everything (and Mess With Big Tech's Plans)

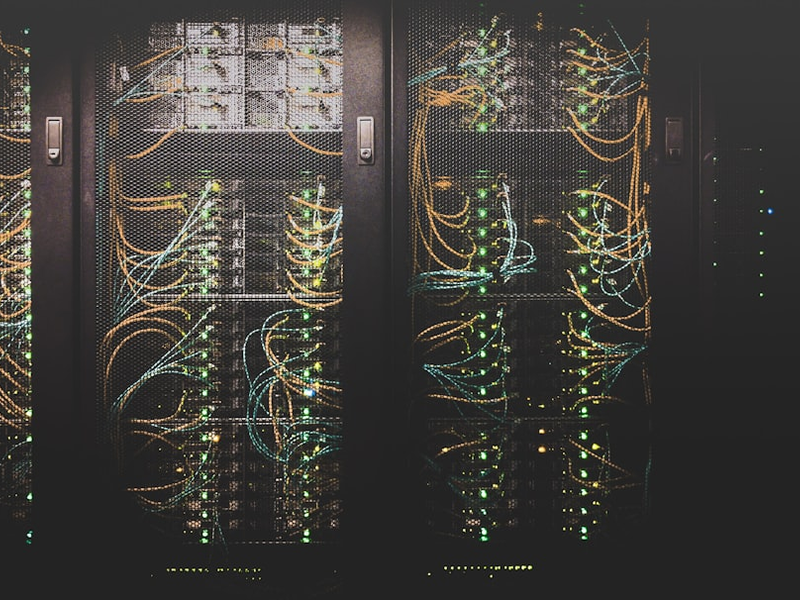

Photo by Taylor Vick on Unsplash

Remember when everyone was freaking out about needing to build a million new data centers to power AI? Yeah, turns out we might not need them after all, and that’s going to be a huge problem for some very powerful companies.

Google just dropped something called TurboQuant, a new compression algorithm that makes AI models use way less memory. We’re talking about shrinking an AI’s memory footprint by a factor of six. That’s not just a minor tweak; that’s the kind of breakthrough that changes how we think about AI infrastructure.

Here’s why this matters to you: smaller AI models mean less energy consumption, which means your phone could actually run a genuinely powerful AI assistant without draining the battery in five minutes. It also means we don’t need to scramble to build all these massive data centers that nobody wants in their backyard anyway.

But here’s where it gets interesting from a political economy perspective. For the past few years, the tech stock market has been betting on one thing: that we’d need endless expansion of data centers, which would require endless purchases of chips from NVIDIA. That assumption has been printing money for nearly every major tech company. And now TurboQuant is threatening to puncture that whole narrative.

The infrastructure buildout everyone promised is already running into serious problems. We’re talking about power generation issues, local government resistance, and the fact that communities across America, including organizations like the NAACP, are pushing back against these massive energy-hungry facilities in their neighborhoods. It turns out building a bunch of new data centers is actually pretty hard when people don’t want them.

So what happens when you combine technological breakthroughs that make AI more efficient with real-world barriers to building new infrastructure? Necessity becomes the mother of invention. Companies figure out how to do more with less, which is exactly what TurboQuant does.

The algorithm works by compressing one of the most memory-hungry parts of a running AI model: the key-value cache, which holds the attention data the model has already computed for earlier parts of a conversation so it doesn’t have to recompute it for every new word. By using some clever mathematical tricks, TurboQuant stores that data in far fewer bits, which makes reading it back faster and less resource-intensive.
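
To make the idea of squeezing cached numbers into fewer bits concrete, here is a minimal sketch of plain uniform quantization in Python. To be clear, this is not TurboQuant’s actual method, which the article doesn’t detail; it’s a generic textbook technique swapped in for illustration. The function names, the 128-value vector, the 4-bit setting, and the 16-bit baseline are all assumptions made up for this example.

```python
import random

def quantize(vec, bits=4):
    """Map floats to integer codes in [0, 2**bits - 1] using one scale and offset per vector."""
    levels = (1 << bits) - 1
    lo, hi = min(vec), max(vec)
    scale = (hi - lo) / levels or 1.0  # fall back to 1.0 if every value is identical
    codes = [min(levels, max(0, round((x - lo) / scale))) for x in vec]
    return codes, scale, lo

def dequantize(codes, scale, lo):
    """Turn integer codes back into approximate floats."""
    return [c * scale + lo for c in codes]

def compression_ratio(n_values, bits, original_bits=16, overhead_bits=32):
    """Original size over compressed size, counting the stored scale and offset as overhead."""
    return (n_values * original_bits) / (n_values * bits + overhead_bits)

# Hypothetical stand-in for one cached key vector (assumed length: 128 values).
random.seed(0)
key_vector = [random.gauss(0.0, 1.0) for _ in range(128)]

codes, scale, lo = quantize(key_vector, bits=4)
restored = dequantize(codes, scale, lo)

# Rounding to the nearest level means no value moves by more than half a step.
max_error = max(abs(a - b) for a, b in zip(key_vector, restored))
print(f"worst-case error: {max_error:.4f} (half a step is {scale / 2:.4f})")
print(f"compression vs. 16-bit storage: {compression_ratio(128, 4):.2f}x")
```

Under these assumptions, 4-bit codes give a bit under 4x savings over 16-bit storage, with a small rounding error on every value. Getting to 6x from that same assumed baseline would mean averaging under 3 bits per value, which is where a naive approach like this one starts to lose too much accuracy and smarter math has to take over.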

The broader pattern here is worth paying attention to. Compression technology has been quietly revolutionizing how we use computers for decades, from ZIP files to video streaming. Now it’s AI’s turn. The result could be genuinely transformative, whether that means more powerful AI running on your own devices instead of massive corporate servers or a fundamental reshaping of how the AI economy works at scale.

Either way, we’re looking at a future where bigger isn’t automatically better, and that’s going to make a lot of people’s business plans look a lot less profitable.

AUTHOR: tgc

SOURCE: Mashable