San Francisco's Anthropic Is Taking On The Federal Government Over AI Safety Restrictions

Photo by UK Prime Minister | License

San Francisco-based AI company Anthropic just filed a major lawsuit against the U.S. Department of War and 16 other federal agencies, and honestly, the drama is wild. The company is challenging its designation as a “supply chain risk,” a label that’s basically a death sentence for government contracts and could tank its relationships with private customers too.

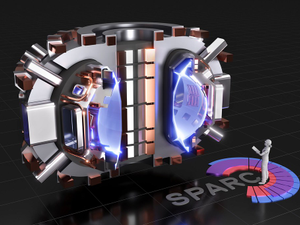

Here’s what went down: Anthropic, the genius minds behind the popular Claude chatbot, has been working with the federal government since November 2024. The company built its entire business around the idea that AI should have guardrails. Its usage policy explicitly prevents customers, including the government, from using its technology for lethal autonomous warfare or mass surveillance of Americans. It’s a stance the company takes seriously, rooted in its commitment to developing AI safely.

But then in September 2025, the Department of War basically said, “nah, we want unlimited access.” The two sides negotiated for months, with Anthropic offering compromises but refusing to budge on those two key restrictions. Anthropic argued, reasonably, that current AI technology just isn’t safe enough for those applications yet.

Then things got political. On February 24, Secretary of War Pete Hegseth gave Anthropic an ultimatum: drop the restrictions by 5 p.m. on February 27, or face being branded a supply chain risk. Four days later, just before the U.S. and Israel launched a bombing campaign against Tehran, President Trump posted a social media tirade calling Anthropic a “Radical Left” company with “Leftwing nut jobs” as employees. He ordered every federal agency to immediately stop using Anthropic’s technology.

That same evening, Hegseth officially designated Anthropic as a supply chain risk and banned any military contractors from working with them. But here’s where it gets absurd: he also said Anthropic would continue providing services for up to six months. So the company is simultaneously a grave national security threat and too essential to national security to immediately cut off.

Anthropic’s lawyers are arguing this is straight-up retaliation for the company’s public stance on AI safety, violating their First Amendment rights. They’re also saying the government violated due process by stripping away the company’s property and contracts without proper legal procedure.

The case landed in the lap of U.S. District Judge Rita Lin in San Francisco, and Anthropic is pushing hard for an emergency hearing to freeze everything while the lawsuit plays out. Even employees from Anthropic and OpenAI are lining up to file “friend of the court” briefs supporting the company.

This whole situation is basically a test of whether the government can use national security designations as a weapon against companies that refuse to compromise their values. Spoiler alert: it’s gonna get messy.

AUTHOR: cgp

SOURCE: Local News Matters