Your Mac Can Now Run AI Models Faster. Here's What That Means for You

Photo by Mattruffoni | License

If you’ve been curious about running artificial intelligence models directly on your computer instead of relying on expensive cloud services, there’s some genuinely exciting news. Ollama, a tool that lets you run large language models locally, just got a significant speed boost for Mac users, and it’s opening up new possibilities for folks tired of subscription fees and rate limits.

The update brings support for Apple’s MLX framework, which is designed to make machine learning run efficiently on Apple Silicon chips, meaning the M1 and newer. On top of that, Ollama added improved caching performance and support for Nvidia’s NVFP4 format, a 4-bit compression format that lets models use far less memory while aiming to keep quality loss small.

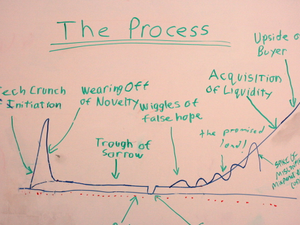

Why should you care? Well, as more people discover the frustrations of hitting rate limits on ChatGPT or paying premium prices for Claude Code, running models locally on your own hardware is becoming genuinely appealing. You don’t have to worry about OpenAI or Anthropic throttling your usage or jacking up subscription costs. You own the whole process.

The timing is perfect, honestly. Open source projects like OpenClaw have exploded in popularity, with hundreds of thousands of developers experimenting with running their own models. What was once purely the domain of researchers and hardcore hobbyists is becoming mainstream, and these performance improvements make it way more practical for regular people.

Developers in particular are getting excited about local coding models. If you’re someone who codes and uses AI tools constantly, those rate limits and high subscription costs really add up. Local models mean unlimited usage for the price of your computer’s electricity.

There’s a catch, though: the new MLX support is still in preview, and right now it only works with one model, the 35-billion-parameter version of Alibaba’s Qwen3.5. You’ll also need serious hardware: a Mac with Apple Silicon, sure, but also at least 32GB of RAM to make this work smoothly. That’s not exactly budget territory, but it may work out cheaper than paying for premium AI subscriptions year after year.
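To see why a 35-billion-parameter model pushes you toward 32GB of RAM, and why compression matters so much, here is a rough back-of-the-envelope sketch. It only counts the model weights, and the bit widths are illustrative assumptions rather than Ollama's actual settings; real 4-bit formats store some extra scaling data, and the running model needs additional memory on top of the weights.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (10^9 bytes)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Illustrative bit widths (assumptions): uncompressed 16-bit vs. smaller formats.
for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: ~{weight_memory_gb(35, bits):.1f} GB of weights")
```

By this estimate, the weights alone take roughly 70 GB at 16 bits but about 17.5 GB at 4 bits, which is the difference between not fitting at all and fitting on a 32GB Mac with room left for everything else.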

Ollama also recently expanded its integration with Visual Studio Code, making it easier for developers to plug local models directly into their workflow.
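If you’re wondering what plugging a local model into your own workflow can look like outside an editor, here is a minimal sketch that talks to Ollama’s local HTTP API using only Python’s standard library. It assumes Ollama is running on its default port (11434) and that you have already downloaded a model; the helper names `build_request` and `ask` are made up for this example, and the model tag is a placeholder you would swap for whatever you have installed.

```python
import json
import urllib.request

# Ollama's default local endpoint (assumes a stock install running on this machine).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming generate request for the local Ollama server."""
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False})
    return urllib.request.Request(
        OLLAMA_URL,
        data=payload.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt and return the generated text (hypothetical helper)."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

With the server running, something like `print(ask("your-model-tag", "Explain this regex: ^\\d{3}-\\d{4}$"))` would return the model’s answer, with no API key and no rate limit involved.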

The bigger picture here is that the barrier to entry for running sophisticated AI locally keeps getting lower. Incremental improvements like better caching, optimized frameworks, and compression formats are steadily making it more realistic for everyday users with decent hardware to have their own AI capabilities without corporate intermediaries.

If you’ve got a newer Mac with decent specs and you’re interested in taking the plunge, now’s actually a pretty good time to experiment.

AUTHOR: mp

SOURCE: Ars Technica