California Schools Are Figuring Out What to Actually Do With AI. And It's Complicated

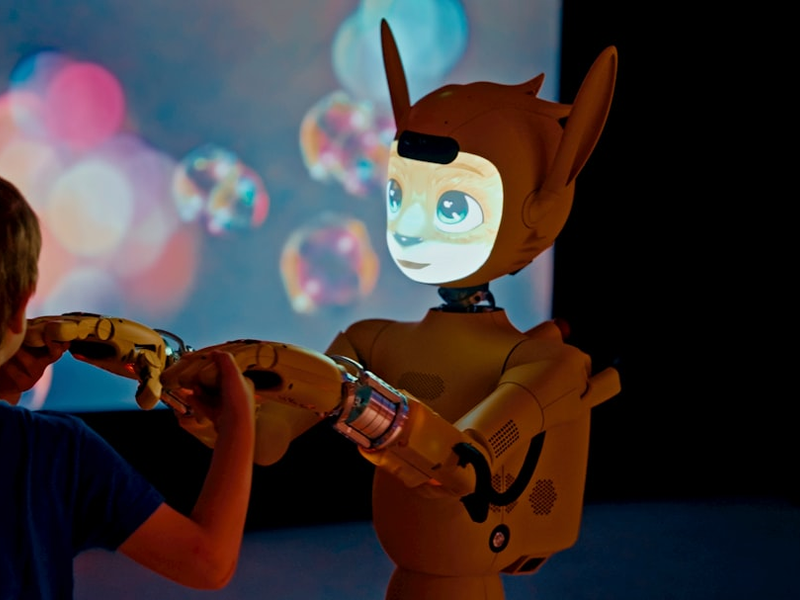

Photo by Enchanted Tools on Unsplash

California school districts are at a crossroads. As artificial intelligence tools become increasingly common in classrooms, educators are grappling with a fundamental question: should we be using this technology at all, and if so, how do we do it responsibly?

The stakes feel real. On one hand, AI promises to lighten teachers’ workloads and personalize learning for students. On the other, critics worry about threats to critical thinking, student privacy, and algorithmic bias. There is no easy answer, which is exactly why districts like ABC Unified in the greater Los Angeles area are taking their time to figure it out.

When Mike Lawrence became director of information and technology at ABC Unified two years ago, he inherited some initial AI guidelines. But he didn’t just implement them from the top down. Instead, he posted a draft document online, invited feedback from the community, and started hosting quarterly roundtable discussions about AI in education. The district now uses Google’s Gemini for students in grades 7-12, while blocking ChatGPT entirely. They’ve also created a “transparency badge” system that labels whether AI was used in documents, emails, and even student work.

It’s this kind of thoughtful, community-driven approach that experts say is missing from much of the conversation around AI in schools. Rebecca Winthrop, director of the Center for Universal Education at the Brookings Institution, found that families have been largely shut out of these discussions, despite how important those discussions are. Her team’s research suggests that the potential risks of AI use among children currently outweigh the benefits.

Stephen Aguilar, an associate professor at USC’s Rossier School of Education, argues we need to stop getting excited about AI as some revolutionary force and start treating it like what it actually is: a set of tools that may or may not solve specific problems. Before adopting any AI tool, educators should ask themselves hard questions about what mistakes they’re willing to accept in service of their goals.

A cautionary tale comes from Los Angeles Unified, which rolled out a chatbot called Ed in 2024 as a “personal assistant” for students. The rollout was splashy and ambitious, and it ultimately failed within three months when the company that helped develop it fell apart. That kind of move-fast-and-break-things rollout can damage trust and waste resources.

Lawrence recognizes that progress on this issue requires hearing from skeptics, not just AI enthusiasts. He’s actively seeking feedback from teachers who avoid using AI entirely, including history teachers who want students to disconnect completely. That kind of listening, without immediately defending current choices, is exactly what thoughtful AI implementation should look like.

As Lawrence puts it, this is a journey, not a one-time decision. Schools across California are learning that getting AI right in education means slowing down, involving the entire community, and staying humble about what these tools can and cannot do.

AUTHOR: mb

SOURCE: Local News Matters